Mini REST+JSON benchmark: Python 3.5.1 vs Node.js vs C++

Some time ago at Nokia I voluntarily developed NSearch, a search engine tailored for internal resources (Windows shares, intranet sites, LDAP directories, etc.). Since then a few people have helped me improve it, so it became the unofficial "search that simply works". However, as you can imagine, this was purely a side project, so I didn't pay much attention to the quality of the code (I'd love to have done so, but cruel time didn't permit :/). As a result, a lot of technical debt accumulated over the months.

Recently we came to the conclusion that enough is enough. We agreed that the backend of the service would be first in line. Because all of it was about to be rewritten, we thought it might be the perfect time to evaluate other technologies. Currently it uses Python 2.7 + Django + Gunicorn. We are considering moving to either Node.js with Express 4, Python 3 with aiohttp, or C++. Maybe another language would be an even better match? However, we don't program in any other languages on a daily basis...

In this post I'd like to show you the results of my very simple performance evaluation of these three technologies, along with some findings.

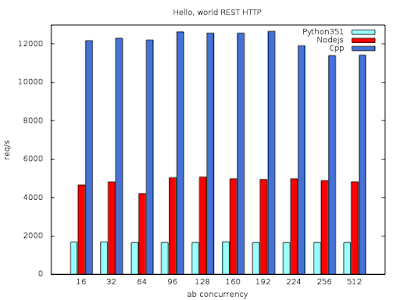

Technical facts

- everything was tested on a machine with an Intel E5-2680 v3 CPU and 192 GB of RAM, running RHEL 7.1,

- applications were run inside Docker containers, so there might be some overhead introduced by libcontainer, libnetwork, etc.,

- ab (ApacheBench) was used for benchmarking, with 1M requests at different concurrency settings,

- max timeout was set to 1 second.
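To make the setup above a bit more concrete, here is a rough sketch of how such ab runs could be scripted. The flags `-n`, `-c` and `-s` are real ab options, but the URL, the helper name `build_ab_command` and the concurrency level of 64 are assumptions made for illustration; these are not the exact commands used for the measurements.

```python
import shlex


def build_ab_command(url, requests=1_000_000, concurrency=192, timeout_s=1):
    """Return the argument list for one ApacheBench run (hypothetical helper)."""
    return [
        "ab",
        "-n", str(requests),     # total number of requests to perform
        "-c", str(concurrency),  # number of requests kept in flight at once
        "-s", str(timeout_s),    # seconds to wait for a response before giving up
        url,
    ]


# Assumed endpoint and concurrency levels, for illustration only.
URL = "http://localhost:8080/"
for level in (64, 192, 512):
    command = build_ab_command(URL, concurrency=level)
    print(" ".join(shlex.quote(arg) for arg in command))
```

The script only prints the command lines; passing the same lists to `subprocess.run` would actually start the benchmark.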

I prepared two JSON objects, one for each of the two benchmarks. The first was a simple {"hello": "world!"}; the second was extracted directly from NSearch and consisted of about 10k characters, but I can't include it here. For C++ I used the Restbed framework and the jsoncpp library, just to have something with normal URL path support (otherwise the results wouldn't be reliable at all).

First benchmark - conclusions:

- all solutions are asynchronous and event-based, and they use event loops. Otherwise 512 concurrent users could not be served with the max timeout set to 1 second

- starting from 192 concurrent users, the number of requests per second starts to decrease slightly

- Python is more than two times slower than Node.js

- C++ is more than two times faster than Node.js
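The point about event loops can be illustrated with a small sketch using only the standard library. It is not the benchmarked aiohttp application: `asyncio.sleep` stands in for waiting on the network, and the 50 ms delay and the function names are assumptions. Handled one after another, 512 such requests would need about 25 seconds; on an event loop they overlap.

```python
import asyncio
import json
import time


async def handle_request(delay_s):
    # Stand-in for waiting on I/O; the event loop serves other requests meanwhile.
    await asyncio.sleep(delay_s)
    return json.dumps({"hello": "world!"})


async def serve_concurrently(users, delay_s=0.05):
    started = time.perf_counter()
    # Schedule all handlers at once and wait until every one has finished.
    bodies = await asyncio.gather(*(handle_request(delay_s) for _ in range(users)))
    return bodies, time.perf_counter() - started


bodies, elapsed = asyncio.run(serve_concurrently(512))
print(len(bodies), "responses in", round(elapsed, 3), "seconds")
```

Note that `asyncio.run` requires Python 3.7 or newer; on 3.5 the same coroutine would be driven with `loop.run_until_complete`.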

Second benchmark - conclusions:

(I excluded C++ because filling the bigger JSON object there was not convenient)

- the gap between Python and Node.js is much smaller with the bigger JSON

- apparently the start of request handling in Python is slow, at least compared to Node.js

- replacing json.dumps with ujson.dumps increases Python performance by about 5%

- Node.js performance drops drastically when the bigger JSON is used - from over 5k requests per second to about 1700!

- Python's drop is not that drastic - from about 1700 to 1200 requests per second. It means that once a request is being handled, Python is not slowing down.
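The serialization part of these numbers can be checked in isolation with a sketch like the one below. Since I can't share the NSearch payload, `LARGE` is a made-up stand-in of roughly 10k characters, and `seconds_per_call` is a hypothetical helper. ujson is an optional third-party package, so the script falls back to the standard json module when it isn't installed. This measures only the dumps call, not the whole request, so don't expect it to reproduce the requests-per-second figures.

```python
import json
import timeit

try:
    import ujson  # optional third-party package
except ImportError:
    ujson = None

SMALL = {"hello": "world!"}

# Made-up stand-in for the real payload, sized to roughly 10k characters.
LARGE = {
    "results": [
        {"id": i, "title": "result %d" % i, "path": "//share/dir/file%d.txt" % i}
        for i in range(150)
    ]
}


def seconds_per_call(dumps, payload, number=2000):
    """Average time of one dumps(payload) call over `number` repetitions."""
    return timeit.timeit(lambda: dumps(payload), number=number) / number


for name, payload in (("small", SMALL), ("large", LARGE)):
    size = len(json.dumps(payload))
    print(name, size, "chars, json:", seconds_per_call(json.dumps, payload))
    if ujson is not None:
        print(name, size, "chars, ujson:", seconds_per_call(ujson.dumps, payload))
```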

Why is Python so slow and Node.js so much faster?

Python is an interpreted language. This is the main reason why it's so slow. Why is that not the case with Node.js? Because Node.js uses V8, a JavaScript engine with a built-in JITter. A JITter is a Just-In-Time compiler, which can speed up execution of a program by an order of magnitude.
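To see what "interpreted" means in practice, the standard dis module can list the bytecode that CPython executes one instruction at a time for a function. This is only an illustrative sketch with a made-up function `add`; the exact instruction names differ between Python versions. A JITter would instead translate frequently executed code like this into machine code.

```python
import dis


def add(a, b):
    return a + b


# Each entry is one bytecode instruction that the interpreter loop dispatches.
opnames = [instruction.opname for instruction in dis.get_instructions(add)]
print(opnames)
```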

Can Python be faster? Yes!

There's also a Python interpreter with a built-in JITter - PyPy. Unfortunately, it doesn't support Python 3.5.1 yet.